As you know, Nikkei Stock Average called Nikkei and Dow Jones Industrial Average called by DJIA are both famous stock market indexes. We can get daily data of them easily from below sites.

- DJIA https://fred.stlouisfed.org/series/DJIA

- Nikkei 225: Go to https://indexes.nikkei.co.jp/nkave/index/profile?idx=nk225, and click "日次データ(CSV)"

This topic introduces how to use hive tables with Nikkei and DJIA.

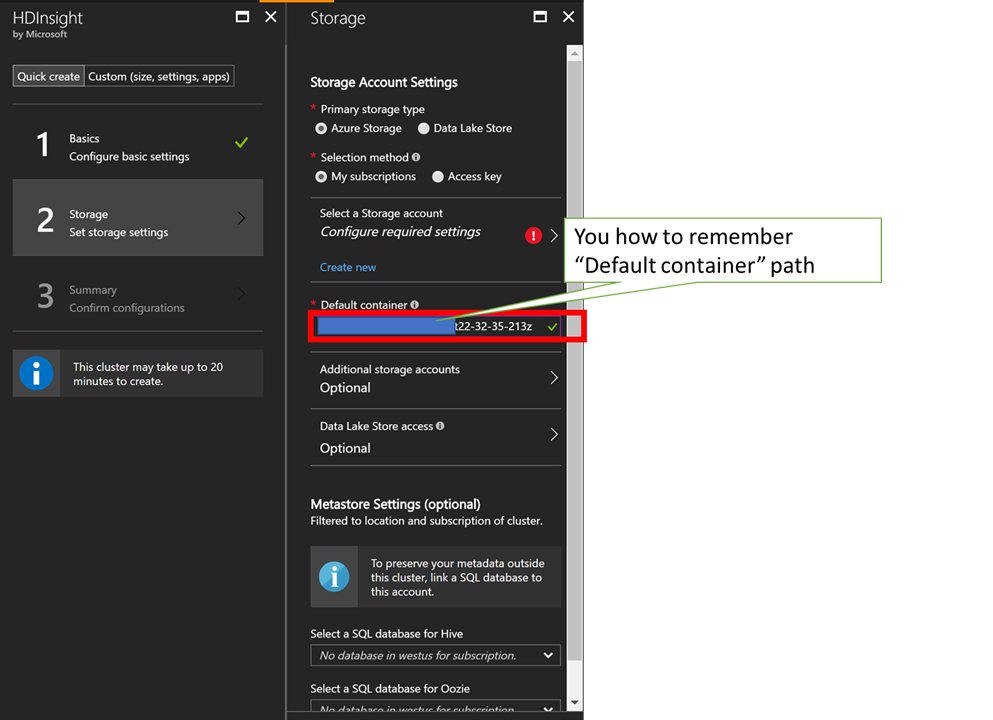

Create a HDInsight cluster

Go to Azure Portal and create new HDInsight cluster! In this sample, I choose HDInsight Spark Cluster, but it's not matter to choose other component which are available to use Hive. Please create or associate a Azure Storage to your cluster like below when you create it, because CSV data will be stored into the Azure Storage.

Create Nikkei and DJIA Hive Tables

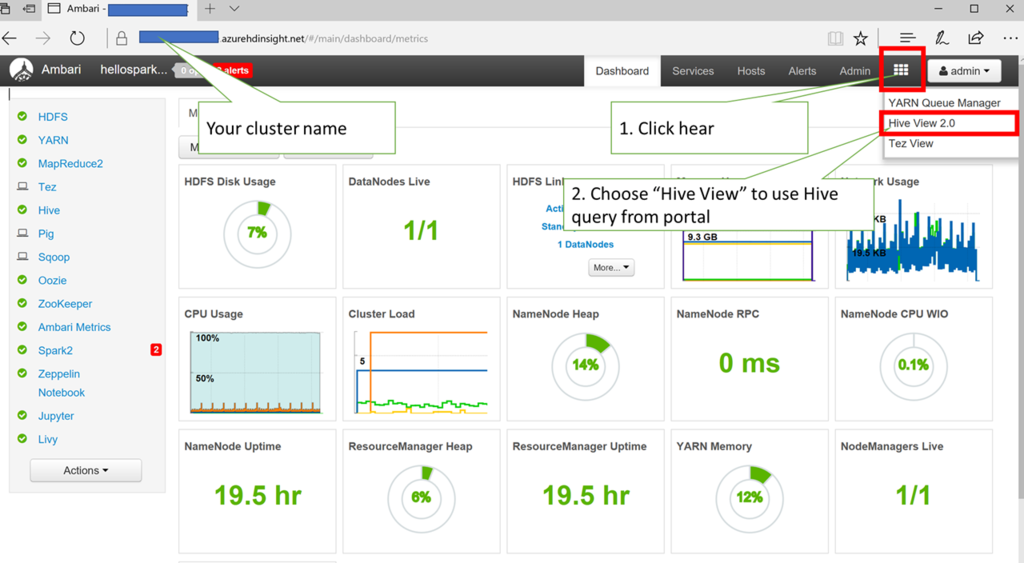

Go to cluster portal called Ambari, and its URL is https://"your cluster name".azurehdinsight.net/. Click top right side button on the portal and choose "Hive View" to use Hive query.

And execute below Hive query in the portal.

CREATE DATABASE IF NOT EXISTS FINANCEDB;

DROP TABLE FINANCEDB.DJIATABLE;

CREATE EXTERNAL TABLE FINANCEDB.DJIATABLE

(

`DATE` STRING,

`DJIA` DOUBLE

) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' lines terminated by '\n' STORED AS TEXTFILE LOCATION 'wasbs:///financedata/DJIA.csv' TBLPROPERTIES("skip.header.line.count"="1");

DROP TABLE FINANCEDB.NIKKEITABLE;

CREATE EXTERNAL TABLE FINANCEDB.NIKKEITABLE

(

`DATE` STRING,

`NIKKEI` DOUBLE,

`START` DOUBLE,

`HIGHEST` DOUBLE,

`LOWEST` DOUBLE

) ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.OpenCSVSerde'

WITH SERDEPROPERTIES (

"separatorChar" = ",",

"quoteChar" = "\""

) STORED AS TEXTFILE LOCATION 'wasbs:///financedata/nikkei_stock_average_daily_jp.csv' TBLPROPERTIES("skip.header.line.count"="1");Now, you can watch your hive table names in your Azure Storage which you have associated in your HDInsight cluster.

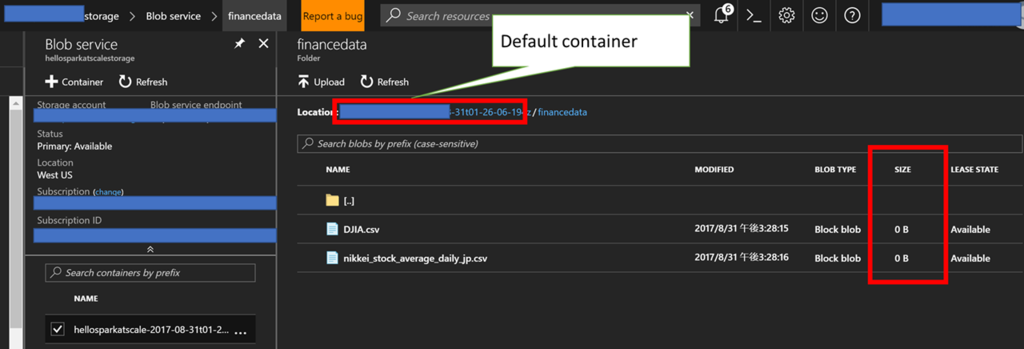

Note those BLOB files are size zero. You should avoid to upload data before executing above queries, because you will get below errors when you execute the queries if you upload them before it.

java.sql.SQLException: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask. MetaException(message:wasbs://helloxxxxxxxxxxxxxx-2017-08-31t01-26-06-194z@hellosparyyyyyyyyyy.blob.core.windows.net/financedata/DJIA.csv is not a directory or unable to create one)

After executing CREATE TABLE queries, upload CSV data into the Azure Storage and override existing BLOB files like below.

Confirm Hive Tables data

Go to "Hive View" in Ambari again, and execute below queries separately to avoid override. You can get some result data if your setup is correct.

SELECT * FROM FINANCEDB.DJIATABLE LIMIT 5; SELECT * FROM FINANCEDB.NIKKEITABLE LIMIT 5;

You should check "TEXTFILE LOCATION" path and "default container" for HDInsight cluster in the Azure Storage if you can't get any data from the queries. Full path of a CSV file is "https://hellosparyyyyyyyyyy.blob.core.windows.net/helloxxxxxxxxxxxxxx-2017-08-31t01-26-06-194z/financedata/DJIA.csv, but some people confuse "default container" path.

Extract data as joined one using Nikkei and DJIA Hive tables

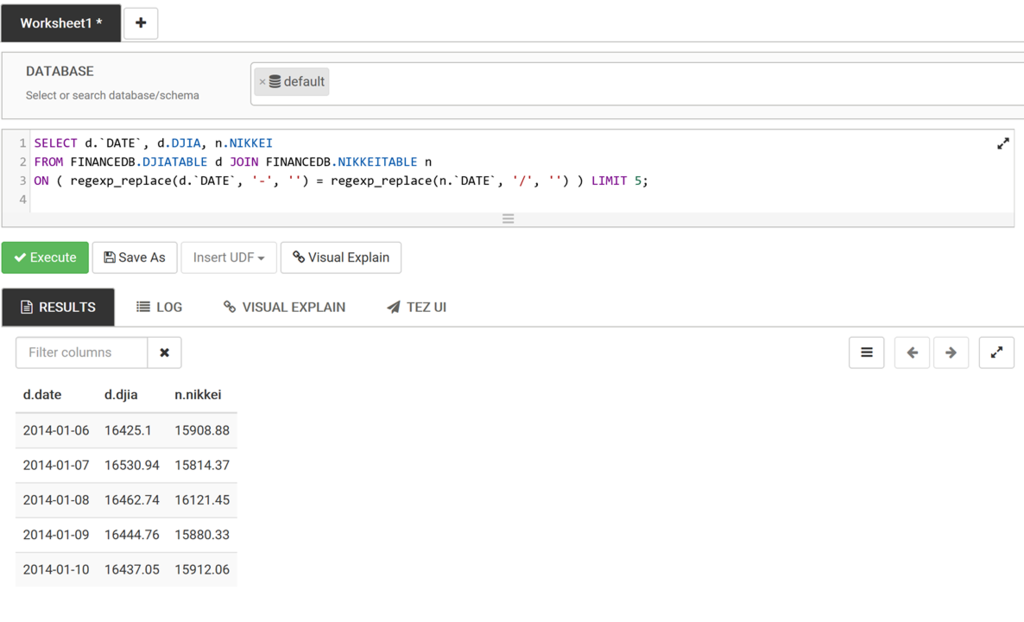

Execute below query to get data with joind one, because Nikkei CSV file expresses date as "2014/01/06" and DJIA one expresses date as "2013-12-16".

SELECT d.`DATE`, d.DJIA, n.NIKKEI FROM FINANCEDB.DJIATABLE d JOIN FINANCEDB.NIKKEITABLE n ON ( regexp_replace(d.`DATE`, '-', '') = regexp_replace(n.`DATE`, '/', '') ) LIMIT 5;

You can view below query result if you have setup correctly, but please note Nikkei is expressed as "YEN" and DJIA is expressed as "Dollar". Please improve this sample to express same concurrency if it's possible!

References

- How to load CSV data with enclosed by double quotes and separated by tab into HIVE table? https://stackoverflow.com/questions/30637536/how-to-load-csv-data-with-enclosed-by-double-quotes-and-separated-by-tab-into-hi

- how to replace characters in hive? https://stackoverflow.com/questions/18090481/how-to-replace-characters-in-hive